The Julia McCoy Method: How to Scale YouTube with AI Avatars

Julia McCoy grew a YouTube channel to 250K subscribers in 18 months using an AI avatar. Here is her exact pipeline — and how I turned it into a reusable Claude Code skill.

Julia McCoy does not record her YouTube videos. An AI avatar does it for her.

Her channel "Dr. McCoy" hit 250K subscribers and 2 million monthly views in 18 months. The avatar gets 3.8x more views than her real-face videos ever did. Her click-through rate sits at 7.8%. Average watch time: eight minutes.

I spent an evening pulling apart how she does it and turned the whole method into a reusable skill anyone can install in Claude Code. Here is what I found.

The pipeline

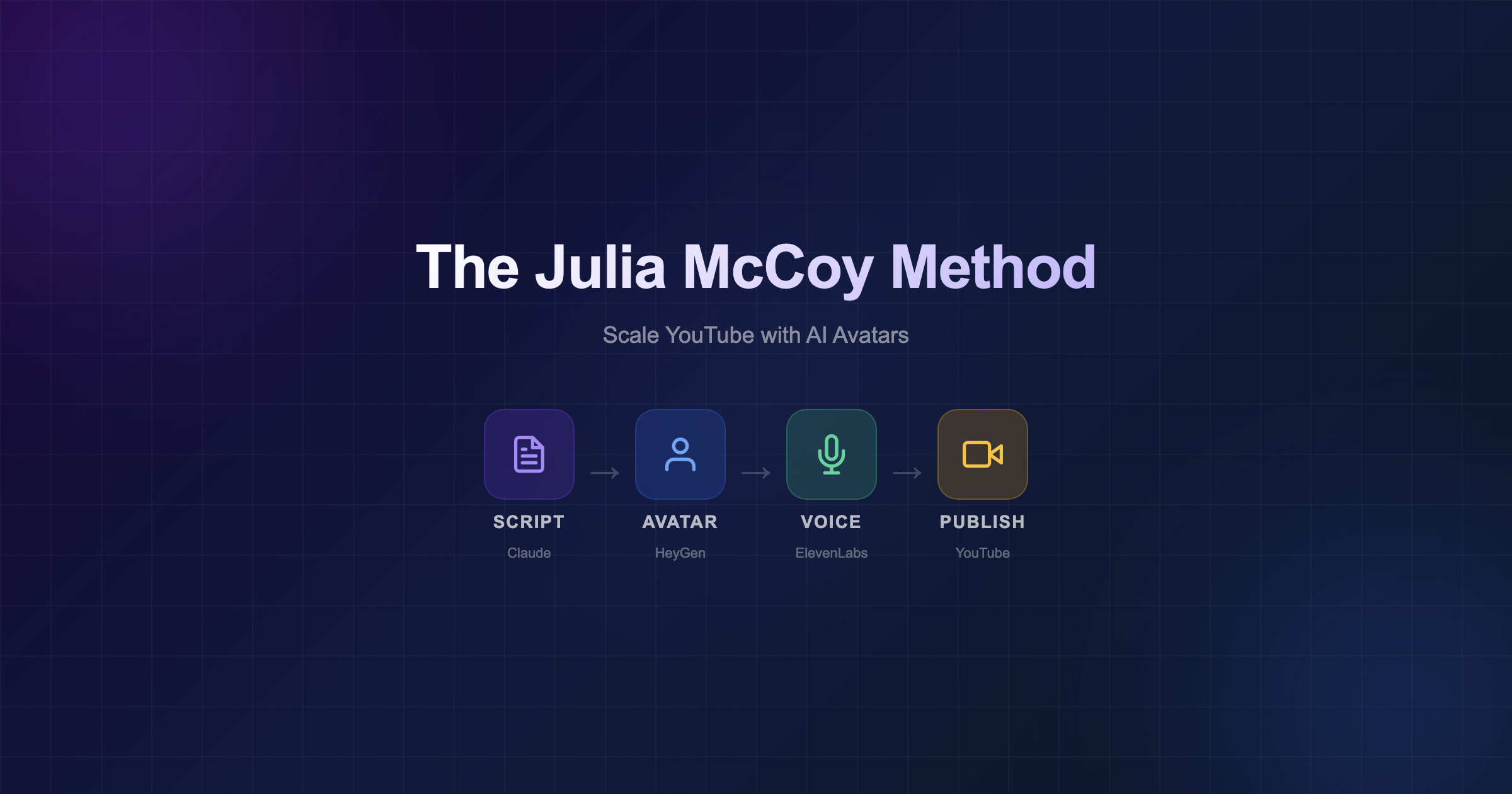

The method is a four-stage pipeline:

Script (Claude) → Avatar (HeyGen) → Voice (ElevenLabs) → Edit → Publish

Each stage has one job. No stage depends on creative energy. That is the point — it turns video production from a performance into a process.

Stage 1: Script with Claude

Julia writes all her scripts with Claude, trained on her own viral content. She feeds in her top-performing videos so the AI learns her voice, her pacing, her hooks.

The script is the only stage that requires thinking. Everything after it is execution.

A good avatar script needs:

- A hook in the first 30 seconds that creates curiosity

- Value delivery for 6-8 minutes

- A clear CTA at the end

- Visual cues baked in: B-roll notes, text-on-screen moments, transition points

If the script is weak, the avatar cannot save it. If the script is strong, the avatar disappears and the content takes over.

Stage 2: Avatar with HeyGen

HeyGen generates a digital twin from training footage. Julia spent over 25 hours refining the data to make her avatar look and sound real.

Her advice on training data: clean footage, consistent lighting, same camera angle, no jump cuts, same microphone throughout. "You are literally teaching the AI who you are."

The minimum is 2-5 minutes of high-quality source video. But more is better. The avatar learns gestures, micro-expressions, head tilts — the small things that make a talking head feel human.

Stage 3: Voice with ElevenLabs

The voice clone is trained on about two hours of Julia's own audiobook recordings. Clean audio, no background noise, natural speaking pace.

This is where most people underestimate the effort. A bad voice clone sounds robotic regardless of how good the script is. The training audio needs to sound like a person talking, not a person reading.

Stage 4: Assembly

A production team of five people assembles the final video — avatar footage, voice, B-roll, captions, thumbnails, metadata.

One detail that surprised me: Julia uses real photos for thumbnails, not the avatar. The avatar delivers the content. The real face sells the click.

Why it works

The obvious answer is scale. She can publish daily without burning out.

But the deeper answer is consistency. The avatar never has a bad day. It never fumbles a sentence. It never needs three takes for one paragraph. The quality floor is higher than most humans can sustain.

And the audience does not seem to care. Her first cloned video had the most engagement of anything she had ever published. People watch for the content, not for proof of biological presence.

The skill

I packaged the method into a Claude Code skill with four modes:

| Mode | What it does |

|---|---|

| script | Write a full video script with hooks, timing, B-roll cues |

| plan | Design your avatar video pipeline from scratch |

| audit | Check if you have everything needed to start |

| optimize | Improve an existing script against Julia's benchmarks |

Install it:

claude install-skill https://github.com/razbakov/skills/tree/main/skills/julia-mccoy-method

Then use it:

/julia-mccoy-method

The skill knows the benchmarks (7%+ CTR, 8+ min watch time, 3x view improvement), the training data requirements, the cost breakdown, and the full production checklist.

What this means for creators

The barrier to YouTube is no longer "I do not want to be on camera" or "I do not have time to film." The barrier is now: do you have something worth saying?

Julia's method proves that the content matters more than the delivery mechanism. The avatar is a tool. The script is the product.

If you have expertise, a point of view, and two hours of clean audio — you have a YouTube channel.

Cost breakdown

| Tool | Cost/month |

|---|---|

| HeyGen (Business) | $89-199 |

| ElevenLabs (Creator) | $22-99 |

| Claude (Pro) | $20 |

| Video editor | $0-500 |

For under $500/month you can publish daily. Julia's channel generates significantly more than that in revenue.

My take

I am not building a YouTube avatar channel. But I am building a video delivery platform for dance festivals, and this method is directly relevant to how we think about content at scale.

The principle is the same whether you are making educational videos or marketing clips: separate the creative work (scripting) from the production work (filming, editing), and let AI handle the production.

The creative work still needs a human. Probably always will. But the production bottleneck? That is gone.

Have thoughts about this post? Let's discuss it on X!

Alösha

Building community platforms, teaching salsa, writing to find my people.

You might also like

Severance, but for AI

Why personal and work avatars need separate memory, and why they should sometimes be allowed to talk.

I Built an Executive Team of 6 AI Agents to Manage My 15 Side Projects

How I use Claude Code to run an autonomous AI agent team that handles code review, content, strategy, coaching, and community across 15 projects while working full-time.